For all their progress, computers are still pretty unimpressive. Sure, they can pilot aircraft and simulate nuclear reactors. But even our best machines struggle with tasks that we humans find easy, like controlling limbs and parsing the meaning of this paragraph.

It’s a little sobering, actually. The average human brain packs a hundred billion or so neurons, connected by a quadrillion (10¹⁵) constantly changing synapses, into a space the size of a cantaloupe. It consumes a paltry 20 watts, much less than a typical incandescent lightbulb. But simulating this mess of wetware with traditional digital circuits would require a supercomputer that’s a good 1000 times as powerful as the best ones we have available today. And we’d need the output of an entire nuclear power plant to run it.
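Dividing the paragraph's own round numbers gives a feel for the scale. That works out to roughly 10,000 synapses per neuron and a power budget of about 0.2 nanowatts per neuron. The Python sketch below does that arithmetic. It uses only the rounded figures quoted above, so treat the results as order-of-magnitude estimates, not measurements.

```python
# Order-of-magnitude figures quoted in the paragraph above (rounded, not measured).
NEURONS = 1e11        # "a hundred billion or so neurons"
SYNAPSES = 1e15       # "a quadrillion (10^15) ... synapses"
BRAIN_POWER_W = 20.0  # "a paltry 20 watts"

# Average connectivity and power budget per neuron implied by those figures.
synapses_per_neuron = SYNAPSES / NEURONS
watts_per_neuron = BRAIN_POWER_W / NEURONS

print(f"synapses per neuron: {synapses_per_neuron:.0e}")
print(f"power per neuron: {watts_per_neuron:.1e} W")
```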

Closing this computational gap is important for a couple of reasons. First, it can help us understand how the brain works and how it breaks down. There is only so much to learn on the coarse level, from imagers that show how the brain lights up when we remember a joke or tell a lie, and on the fine level, from laboratory studies of the basic biology of neurons and their wirelike dendrites and axons. All the real action happens at the intermediate level, where millions of networked neurons work in concert to produce behaviors you couldn’t possibly predict by watching a handful of neurons fire. To make progress in this area you need computational muscle.

And second, it’s quite likely that finding ways to mimic the brain could pave the way to a host of ultraspeedy, energy-efficient chips. By solving this grandest of all computational challenges, we may well learn how to handle many other difficult tasks, such as pattern recognition and robot autonomy.

Fortunately, we don’t have to rely on traditional, power-hungry computers to get us there. Scattered around the world are at least half a dozen projects dedicated to building brain models using specialized analog circuits. Unlike the digital circuits in traditional computers, which could take weeks or even months to model a single second of brain operation, these analog circuits can model brain activity as fast as or even faster than it really occurs, and they consume a fraction of the power. But analog chips do have one serious drawback—they aren’t very programmable. The equations used to model the brain in an analog circuit are physically hardwired in a way that affects every detail of the design, right down to the placement of every analog adder and multiplier. This makes it hard to overhaul the model, something we’d have to do again and again because we still don’t know what level of biological detail we’ll need in order to mimic the way brains behave.
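The programmability argument can be made concrete. In a digital simulator, the neuron equations are ordinary code. Swapping one model for another therefore means passing in a different function, with no circuit redesign. The Python sketch below illustrates this with a simple leaky integrate-and-fire neuron stepped by forward Euler integration. The choice of model, the function names, and every parameter value are illustrative assumptions. They are not the equations used by SpiNNaker or by any analog project.

```python
from typing import Callable

# Hypothetical interface: a neuron model maps (voltage in mV, input current, dt in ms)
# to the voltage after one time step.
UpdateRule = Callable[[float, float, float], float]


def leaky_integrate_and_fire(v: float, current: float, dt: float) -> float:
    """One forward-Euler step of a leaky integrate-and-fire neuron (illustrative values)."""
    v_rest, tau = -65.0, 10.0  # resting potential (mV), membrane time constant (ms)
    return v + dt * ((v_rest - v) + current) / tau


def perfect_integrator(v: float, current: float, dt: float) -> float:
    """A simpler model with no leak, included only to show the rule is swappable."""
    return v + dt * current / 10.0


def simulate(rule: UpdateRule, current: float, steps: int, dt: float = 1.0,
             v_threshold: float = -50.0, v_reset: float = -65.0) -> list[int]:
    """Step one neuron with a constant input and return the steps at which it spiked."""
    v = v_reset
    spikes = []
    for t in range(steps):
        v = rule(v, current, dt)
        if v >= v_threshold:  # crossing the threshold emits a spike...
            spikes.append(t)
            v = v_reset       # ...and the membrane voltage is reset
    return spikes


# Changing the model is a one-argument change rather than a hardware redesign.
print(len(simulate(leaky_integrate_and_fire, current=20.0, steps=100)))
print(len(simulate(perfect_integrator, current=20.0, steps=100)))
```

In an analog chip, each of these update rules would correspond to a different physical arrangement of adders and multipliers.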

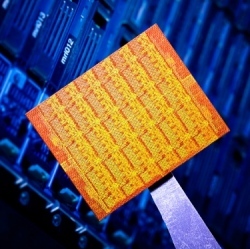

To help things along, my colleagues and I are building something a bit different: the first low-power, large-scale digital model of the brain. Dubbed SpiNNaker, for Spiking Neural Network Architecture, our machine looks a lot like a conventional parallel computer, but it boasts some significant changes to the way chips communicate. We expect it will let us model brain activity with speeds matching those of biological systems but with all the flexibility of a supercomputer.

Over the next year and a half, we will create SpiNNaker by connecting more than a million ARM processors, the same kind of basic, energy-efficient chips that ship in most of today’s mobile phones. When it’s finished, SpiNNaker will be able to simulate the behavior of 1 billion neurons. That’s just 1 percent as many as are in a human brain but more than 10 times as many as are in the brain of one of neuroscience’s most popular test subjects, the mouse. With any luck, the machine will help show how our brains do all the incredible things that they do, providing insights into brain diseases and ideas for how to treat them. It should also accelerate progress toward a promising new way of computing.
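The figures above also show how much work each processor takes on. The sketch below treats "more than a million" as exactly one million. On that assumption, each ARM processor handles at most about a thousand neurons. The 1 percent figure follows from the article's earlier estimate of a hundred billion neurons in a human brain.

```python
# Figures from the article; "more than a million" is rounded down to exactly 1e6,
# so the per-processor result is an upper bound.
PROCESSORS = 1_000_000
MODEL_NEURONS = 1_000_000_000          # "1 billion neurons"
HUMAN_NEURONS = 100_000_000_000        # "a hundred billion or so neurons"

neurons_per_processor = MODEL_NEURONS // PROCESSORS
fraction_of_human_brain = MODEL_NEURONS / HUMAN_NEURONS

print(f"neurons per processor (at most): {neurons_per_processor}")
print(f"fraction of a human brain: {fraction_of_human_brain:.0%}")
```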